A few items have recently come to my attention that may be of interest to Perfect Health Diet readers.

First, my friend Chris Keller on Facebook reports that a new startup, Aperiomics, is offering tests that are capable of identifying 37,000 different infectious pathogens, including bacteria, viruses, fungi, and protozoa.

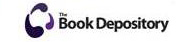

This is a game-changing diagnostic tool. Infections are one of the leading causes of disease (along with bad diet and lifestyle), yet standard medical practice is unable to diagnose most infections. Many infections are treatable, but it’s not easy to treat something you can’t diagnose. Getting a clear and accurate diagnosis of infections and treating them appropriately, along with healthy diet and lifestyle practices such as those recommended in Perfect Health Diet, holds the promise of curing most diseases.

The test is not cheap; Chris thinks it costs about $1,000. But if you have a mysterious health problem, it may well be worth it.

******

Second, you may recall that five years ago, Dr. Hamilton Stapell, of the Ancestral Health Society and an Associate Professor of History at the State University of New York at New Paltz, organized a movement-wide survey. Results of that survey were published in the Journal of Evolution and Health.

Now Dr. Stapell has a follow-up survey. It aims to:

1) Describe how the size and composition of the ancestral health movement have changed over the past five years.

2) Identify common practices and the most important motivating factors for both starting and quitting a paleo lifestyle.

3) Predict the future trajectory of the ancestral health movement.

Please consider taking 3 to 5 minutes to help Dr. Stapell’s research by completing the Ancestral Health (Paleo) Survey 2018. All responses are anonymous and will be used for scholarly purposes only.
